
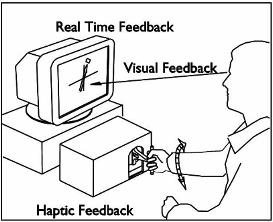

The use of a programmable virtual environment combined with a motorized manual interface offers a unique opportunity to study the nature of human motor adaptation. We theorize that humans use percepts of force and motion to accomplish goal-directed action. In virtual environments, novel feedback conditions and dynamic interactions can be devised that work with or against the expectations of the human operator. By controlling these conditions in human-subject experiments, we aim to discover which features of a mechanical interaction influence the performance and learning of manual skills. In the manual control of an object, interaction forces are not random; they relate directly to the kinematics and to the properties of the object. In such situations with predictable behavior, the human motor system may be able to form a task-appropriate strategy with a simple computational structure. In the current study, we examine how human motor adaptation differs in response to changes in movement specification versus changes in object parameters. For this purpose, we chose a motor task in which interaction with an external inertia is the source of the forces between the arm and the environment. The task is relevant to the study of human movement because it requires adaptation to force perturbations, yet the interactions involved are familiar to humans. We hypothesize that the human motor system controls the motion of external objects using an internal representation of the object that is distinct from the representation of the motion plan.


Huang, Felix; Gillespie, R. Brent


Power and Information Transfer with Haptic Feedback Upper Extremity Stroke Rehabilitation


Adaptation to Object versus Task Specifications


Project Sponsors


Rehabilitation Institute of Chicago, R24